Russian efforts to influence U.S. politics and sway public opinion were consistent and, as far as engaging with target audiences goes, largely successful, according to a report from Oxford’s Computational Propaganda Project published today. Based on data provided to Congress by Facebook, Instagram, Google and Twitter, the study paints a portrait of the years-long campaign that’s less than flattering to the companies.

The report, which you can read here, was published today but given to some outlets over the weekend; it summarizes the work of the Internet Research Agency, Moscow’s online influence factory and troll farm. The data cover various periods for different companies, but 2016 and 2017 showed by far the most activity.

A clearer picture

If you’ve only checked into this narrative occasionally during the last couple of years, the Comprop report is a great way to get a bird’s-eye view of the whole thing, with no “we take this very seriously” palaver interrupting the facts.

If you’ve been following the story closely, the value of the report is mostly in deriving specifics and some new statistics from the data, which Oxford researchers were provided some seven months ago for analysis. The numbers, predictably, all seem to be a bit higher or more damning than those provided by the companies themselves in their voluntary reports and carefully practiced testimony.

Previous estimates have focused on the rather nebulous metric of “encountering” or “seeing” IRA content put on these social networks. This had the dual effect of inflating the affected number (to over 100 million on Facebook alone) while making it easy to downplay: “seeing” sounds unimportant. After all, how many things do you “see” on the internet every day?

The Oxford researchers better quantify the engagement, on Facebook first, with more specific and consequential numbers. For instance, in 2016 and 2017, nearly 30 million people on Facebook actually shared Russian propaganda content, with similar numbers of likes garnered, and millions of comments generated.

Note that these aren’t ads that Russian shell companies were paying to shove into your timeline — these were pages and groups with thousands of users on board who actively engaged with and spread posts, memes and disinformation on captive news sites linked to by the propaganda accounts.

The content itself was, of course, carefully curated to touch on a number of divisive issues: immigration, gun control, race relations and so on. Many different groups (e.g. black Americans, conservatives, Muslims, LGBT communities) were targeted; all generated significant engagement, as this breakdown of the above stats shows:

Although the targeted communities were surprisingly diverse, the intent was highly focused: stoke partisan divisions, suppress left-leaning voters and activate right-leaning ones.

Black voters in particular were a popular target across all platforms, and a great deal of content was posted both to keep racial tensions high and to interfere with their actual voting. Memes were posted suggesting followers withhold their votes, or with deliberately incorrect instructions on how to vote. These efforts were among the most numerous and popular of the IRA’s campaign; it’s difficult to judge their effectiveness, but certainly they had reach.

Examples of posts targeting black Americans.

In a statement, Facebook said that it was cooperating with officials and that “Congress and the intelligence community are best placed to use the information we and others provide to determine the political motivations of actors like the Internet Research Agency.” It also noted that it has “made progress in helping prevent interference on our platforms during elections, strengthened our policies against voter suppression ahead of the 2018 midterms, and funded independent research on the impact of social media on democracy.”

Instagram on the rise

Based on the narrative thus far, one might expect that Facebook — being the focus for much of it — was the biggest platform for this propaganda, and that it would have peaked around the 2016 election, when the evident goal of helping Donald Trump get elected had been accomplished.

In fact Instagram was receiving as much or more content than Facebook, and it was being engaged with on a similar scale. Previous reports disclosed that around 120,000 IRA-related posts on Instagram had reached several million people in the run-up to the election. The Oxford researchers conclude, however, that 40 accounts received in total some 185 million likes and 4 million comments during the period covered by the data (2015-2017).

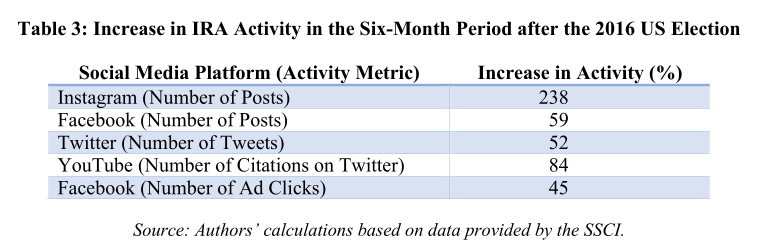

A partial explanation for these rather high numbers may be that, also counter to the most obvious narrative, IRA posting in fact increased following the election — for all platforms, but particularly on Instagram.

IRA-related Instagram posts jumped from an average of 2,611 per month in 2016 to 5,956 in 2017; note that the numbers don’t match the above table exactly because the time periods differ slightly.
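To put that jump in proportion, the two monthly averages quoted above amount to a bit more than a doubling. The short sketch below does the arithmetic using only those two figures; the function and constant names are illustrative and do not come from the report.

```python
def percent_increase(before: float, after: float) -> float:
    """Return the relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


# Average IRA-related Instagram posts per month, as quoted in the article.
POSTS_PER_MONTH_2016 = 2611
POSTS_PER_MONTH_2017 = 5956

growth = percent_increase(POSTS_PER_MONTH_2016, POSTS_PER_MONTH_2017)
ratio = POSTS_PER_MONTH_2017 / POSTS_PER_MONTH_2016

# Prints the increase as a whole-number percentage and as a multiple.
print(f"Increase: {growth:.0f}% (about {ratio:.1f}x the 2016 rate)")
```

By this rough calculation, posting volume on Instagram rose by about 128 percent from one year to the next, subject to the same caveat about slightly differing time periods.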

Twitter posts, while extremely numerous, were quite steady at just under 60,000 per month, totaling around 73 million engagements over the period studied. To be perfectly frank, this kind of voluminous bot and sock puppet activity is so commonplace on Twitter, and the company seems to have done so little to thwart it, that it hardly bears mentioning. But it was certainly there, and often reused existing botnets that had previously chimed in on politics elsewhere and in other languages.

In a statement, Twitter said that it has “made significant strides since 2016 to counter manipulation of our service, including our release of additional data in October related to previously disclosed activities to enable further independent academic research and investigation.”

Google too is somewhat hard to find in the report, though not necessarily because it has a handle on Russian influence on its platforms. Oxford’s researchers complain that Google and YouTube have not just been stingy, but appear to have actively attempted to stymie analysis.

Google chose to supply the Senate committee with data in a non-machine-readable format. The evidence that the IRA had bought ads on Google was provided as images of ad text and in PDF format whose pages displayed copies of information previously organized in spreadsheets. This means that Google could have provided the useable ad text and spreadsheets—in a standard machine-readable file format, such as CSV or JSON, that would be useful to data scientists—but chose to turn them into images and PDFs as if the material would all be printed out on paper.
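To see why the format matters, consider how little work machine-readable data takes to analyze. The sketch below is only an illustration: the records, field names and values are hypothetical placeholders, not data from the report or from Google. It uses nothing beyond Python's standard `csv` and `json` modules.

```python
import csv
import io
import json

# Hypothetical ad records. The field names and values are placeholders,
# not data from the report or from Google.
CSV_TEXT = (
    "ad_id,ad_text,impressions\n"
    "1,placeholder ad text A,100\n"
    "2,placeholder ad text B,250\n"
)
JSON_TEXT = '[{"ad_id": 1, "ad_text": "placeholder ad text A", "impressions": 100}]'


def load_csv(text: str) -> list[dict]:
    """Parse CSV text into one dict per row, keyed by the header line."""
    return list(csv.DictReader(io.StringIO(text)))


def load_json(text: str) -> list[dict]:
    """Parse a JSON array of objects into a list of dicts."""
    return json.loads(text)


csv_rows = load_csv(CSV_TEXT)
json_rows = load_json(JSON_TEXT)

# CSV values arrive as strings, so convert before doing arithmetic.
total_impressions = sum(int(row["impressions"]) for row in csv_rows)
print(len(csv_rows), total_impressions, json_rows[0]["ad_text"])
```

A few lines are enough to load, count and total records in either format. Material delivered as images or PDFs, by contrast, has to be transcribed or run through OCR before any of this is possible.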

Google’s choice of format forced the researchers to collect their own data via citations and mentions of YouTube content. As a consequence, their conclusions are limited. Generally speaking, when a tech company does this, it means that the data it could provide would tell a story it doesn’t want heard.

For instance, one interesting point brought up by a second report published today, by New Knowledge, concerns the 1,108 videos uploaded by IRA-linked accounts on YouTube. These videos, a Google statement explained, “were not targeted to the U.S. or to any particular sector of the U.S. population.”

In fact, all but a few dozen of these videos concerned police brutality and Black Lives Matter, which as you’ll recall were among the most popular topics on the other platforms. It seems reasonable to expect that YouTube would have mentioned this extremely narrow targeting in some way. Unfortunately it was left to a third party to discover, which gives one an idea of just how far a statement from the company can be trusted. (Google did not immediately respond to a request for comment.)

Desperately seeking transparency

In their conclusion, the Oxford researchers (Philip N. Howard, Bharath Ganesh and Dimitra Liotsiou) point out that although the Russian propaganda efforts were, and remain, disturbingly effective and well organized, the country is not alone in this.

“During 2016 and 2017 we saw significant efforts made by Russia to disrupt elections around the world, but also political parties in these countries spreading disinformation domestically,” they write. “In many democracies it is not even clear that spreading computational propaganda contravenes election laws.”

“It is, however, quite clear that the strategies and techniques used by government cyber troops have an impact,” the report continues, “and that their activities violate the norms of democratic practice… Social media have gone from being the natural infrastructure for sharing collective grievances and coordinating civic engagement, to being a computational tool for social control, manipulated by canny political consultants, and available to politicians in democracies and dictatorships alike.”

Predictably, even social networks’ moderation policies became targets for propagandizing.

Waiting on politicians is, as usual, something of a long shot, and the onus is squarely on the providers of social media and internet services to create an environment in which malicious actors are less likely to thrive.

Specifically, this means that these companies need to embrace researchers and watchdogs in good faith instead of freezing them out in order to protect some internal process or embarrassing misstep.

“Twitter used to provide researchers at major universities with access to several APIs, but has withdrawn this and provides so little information on the sampling of existing APIs that researchers increasingly question its utility for even basic social science,” the researchers point out. “Facebook provides an extremely limited API for the analysis of public pages, but no API for Instagram.” (And we’ve already heard what they think of Google’s submissions.)

If the companies exposed in this report truly take these issues seriously, as they tell us time and again, perhaps they should implement some of these suggestions.

Source: How Russia’s online influence campaign engaged with millions for years